AI agent vs chatbot: how to tell the difference before you buy

14 min read

—

You invested in AI. You deployed chatbots. You added copilots. And your resolution rate barely moved. You deflected some FAQs and cut a little handle time – but the hard work still landed with your agents.

That's not a failure of AI. That's what happens when a chatbot is sold as an agent – and when a search bar is mistaken for a true AI teammate.

Whether you're comparing AI agents and chatbots for the first time or re-evaluating tools you've already deployed, this article gives you three things: the real differences, a framework for where your tools actually sit, and a five-question test to run in your next vendor demo.

TL;DR

- Chatbots handle conversations. AI agents handle work. That's the only distinction that matters.

- The AI agent vs chatbot difference is architectural. Chatbots are read-only. AI agents read, write and act.

- Five dimensions separate them for real: understanding, action, memory, reasoning, and learning.

- Most tools sold as "AI agents" in 2026 are still retrieval systems – Level 2 on a 4-level maturity spectrum.

- The agent-washing test at the end gives you five PASS/FAIL questions to verify any vendor's claims in under 20 minutes.

AI agent vs chatbot – the 30-second answer

A chatbot matches your question to a pre-written FAQ answer or a knowledge-base article. An AI agent understands the context behind your question, reasons across connected systems, and takes action to resolve the issue.

One deflects. The other resolves.

The agent-washing problem – why most "AI agents" are still chatbots

Here's a number worth knowing before you evaluate anything: Gartner found that of the thousands of vendors calling their product an "AI agent," only approximately 130 are verifiably agentic by any meaningful architectural standard.

That gap is agent-washing.

Agent-washing is when a vendor takes a retrieval tool, adds a conversational interface, calls it an agent, and ships it. The product looks agentic in a demo. It answers questions fluently. It might even pull data from your CRM. But it can't take an action, enforce a permission, or close a loop without a human finishing the job.

The business cost of getting this wrong is quantifiable – it's what we call the chatbot failure tax:

- 90% of customers have to repeat information to a chatbot because it has no memory of previous interactions

- 45% abandon after just 3 failed interactions

- RAG-based bots resolve only 10-20% of support tickets end-to-end

Source: Forethought.ai

That's not an AI problem. That's an architecture problem. The chatbot was never designed to finish work – it was designed to answer questions. Those are fundamentally different jobs.

This article gives you the framework to tell them apart in any vendor demo.

Five dimensions where AI agents and chatbots actually differ

Most AI agent vs chatbot comparisons focus on surface features – NLP quality, integration count, response speed. Those matter, but they don't tell you whether the tool will actually resolve work.

The five differences below are architectural. You can't close the gap with a better prompt or a new integration. The system either has these capabilities, or it doesn't.

- Understanding vs pattern matching

- Action vs conversation

- Memory vs amnesia

- Reasoning vs scripting

- Learning vs static

1) Understanding vs pattern matching

A chatbot sees text and looks for patterns. It matches keywords to the most relevant document or script in its index. If the match is good, the answer looks right. If the match is off – or if the context lives outside the document – the bot hallucinates an answer or misses entirely.

An AI agent doesn't search for text. It traverses a knowledge graph to understand the entity behind the question: who the customer is, what product they're on, what version they're running, and what's already been tried.

The difference shows up the moment a question gets contextual.

Scenario: A customer contacts support and says, "My export isn't working."

Chatbot: Returns a generic FAQ article about the export feature.

AI agent: Traces the query through the knowledge graph: customer → enterprise plan → Data Export v3.2 → known bug introduced in the latest release → fix shipping in v3.3. Routes the ticket to engineering with full context attached and a suggested customer message that sets the right expectation.

Same question. Completely different outcome.
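To make the contrast concrete, here is a minimal Python sketch of the two lookups, assuming a toy in-memory graph. The node ids, edge labels (`uses`, `on_plan`, `affected_by`, `fixed_in`) and the two-article FAQ index are hypothetical and do not describe any vendor's data model. Keyword matching returns whichever article shares the most words with the question; the graph walk follows typed relationships from the customer to the known bug and its fix.

```python
# Hypothetical FAQ index: article title -> article text.
FAQ = {
    "Exporting your data": "how to use the export feature to download csv files",
    "Resetting your password": "how to reset a forgotten password",
}

def keyword_match(query: str) -> str:
    """Chatbot-style lookup: return the article sharing the most words with the query."""
    words = set(query.lower().split())
    return max(FAQ, key=lambda title: len(words & set(FAQ[title].split())))

# Hypothetical knowledge graph: node id -> {edge label -> neighbouring node id}.
GRAPH = {
    "customer:acme": {"on_plan": "plan:enterprise", "uses": "product:data-export-v3.2"},
    "product:data-export-v3.2": {"affected_by": "bug:export-timeout"},
    "bug:export-timeout": {"fixed_in": "release:v3.3"},
}

def follow(start: str, edges: list[str]) -> list[str]:
    """Agent-style lookup: walk typed edges from an entity, keeping every hop."""
    hops = [start]
    node = start
    for edge in edges:
        node = GRAPH[node][edge]  # raises KeyError if the relationship doesn't exist
        hops.append(node)
    return hops

question = "My export isn't working"
print(keyword_match(question))
# prints: Exporting your data
print(" -> ".join(follow("customer:acme", ["uses", "affected_by", "fixed_in"])))
# prints: customer:acme -> product:data-export-v3.2 -> bug:export-timeout -> release:v3.3
```

The path is hard-coded here for clarity; an actual agent would decide which edges to follow based on the question and what it finds along the way.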

Computer by DevRev is built around a fundamentally different data model. Rather than searching a flat document index, it uses Computer Memory – a knowledge graph that maps the relationships between customers, products, tickets, and code.

When a customer asks a question, Computer doesn't look for the closest match. It traces the entity relationships behind the question: who this customer is, what product they're on, what's already been tried, and what the right next step actually is. That's what makes it a reasoning system, not a retrieval one.

2) Action vs conversation

This is where most "AI agents" reveal themselves.

A chatbot's output is always conversational. It generates a response. It suggests what the user should do next. At best, it drafts a message for a human to review and send. The loop doesn't close until a person closes it.

A real AI agent executes. It processes the refund. It creates the Jira ticket. It updates the CRM. It Slacks the on-call engineer. From a single interaction, the system takes governed actions across multiple tools without a human in the middle.

If an agent can't write back to your systems, it's just a glorified search bar.

That's not a provocative take. It's a technical baseline. Read-only tools are retrieval tools, regardless of what they're called. The AI agent vs chatbot difference in this dimension is binary: does it act, or does it advise?

The resolution data make this concrete. Chatbots and RAG-based tools resolve 10-20% of support interactions end-to-end. Genuine reasoning agents resolve 40-60% or more.

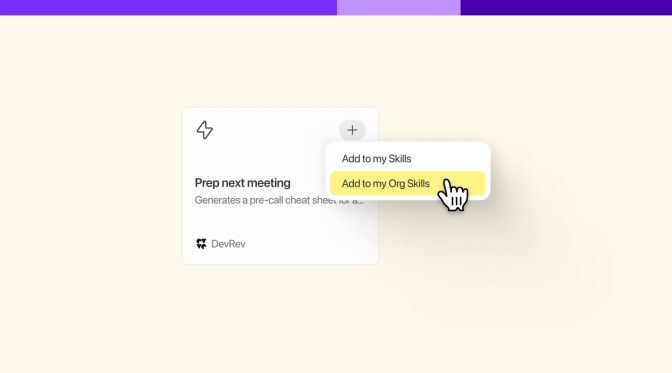

Computer AirSync maintains real-time, two-way sync with all your tools – CRM, ticketing systems, Slack, email, and product data. Unlike one-way integrations that only pull data in, Computer can write back.

When Computer helps process a refund or creates an engineering ticket, that action is reflected across every connected system immediately. Every action runs through permission checks and is fully auditable – so your team knows exactly what was done, when, and why.
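As a rough illustration of "permission checks" plus "fully auditable", here is a minimal Python sketch of a write path in which every action is checked against an allow-list and recorded, including denied attempts. `ActionGateway`, the action names and the in-memory ticket store are hypothetical stand-ins, not Computer's API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable

@dataclass
class AuditEntry:
    actor: str
    action: str
    target: str
    allowed: bool
    at: datetime

@dataclass
class ActionGateway:
    """Hypothetical write path: every action is permission-checked and logged."""
    permissions: dict[str, set[str]]  # actor -> actions it may perform
    audit_log: list[AuditEntry] = field(default_factory=list)

    def execute(self, actor: str, action: str, target: str,
                fn: Callable[[str], None]) -> bool:
        allowed = action in self.permissions.get(actor, set())
        # Record every attempt, including denials, so the trail shows what was tried and blocked.
        self.audit_log.append(
            AuditEntry(actor, action, target, allowed, datetime.now(timezone.utc)))
        if allowed:
            fn(target)  # the actual write to the downstream system
        return allowed

# Usage with an in-memory stand-in for a ticketing system.
tickets = {"ticket-42": "open"}

def close_ticket(ticket_id: str) -> None:
    tickets[ticket_id] = "closed"

def issue_refund(order_id: str) -> None:
    raise RuntimeError("should never run without permission")

gateway = ActionGateway(permissions={"support-agent": {"close_ticket"}})
gateway.execute("support-agent", "close_ticket", "ticket-42", close_ticket)  # allowed
gateway.execute("support-agent", "issue_refund", "order-7", issue_refund)    # denied, but logged
for entry in gateway.audit_log:
    print(entry.actor, entry.action, entry.target, "allowed" if entry.allowed else "denied")
```

Logging before executing is a deliberate choice in this sketch: a denied or failed write still leaves a record of who attempted what, and when.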

The result: not just answers your team can trust, but actions they can stand behind.

3) Memory vs amnesia

Ask a chatbot the same question twice – in two separate sessions – and you'll get the same answer as if you'd never spoken before. Every conversation starts from zero. That's not a design flaw. It's how most of these systems were built.

This is why 90% of customers end up repeating their information to a chatbot. Not because they're impatient – because the tool has no memory of them.

A real AI agent holds two layers of memory:

- Short-term: everything from the current conversation – context, signals, decisions made so far

- Long-term: the full customer history across every system – CRM records, past tickets, product usage, previous escalations, conversations with sales

That second layer is what changes the quality of every interaction. A support agent who knows a customer has had three unresolved tickets in the last 30 days responds differently than one who's meeting them for the first time. AI should work the same way.
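A minimal Python sketch of the two layers, assuming ticket history has already been synced into one place. `CustomerMemory`, its fields and the 30-day window are illustrative assumptions; the point is that the long-term layer lets the system know about recent unresolved tickets before the customer says anything beyond their first message.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta

@dataclass
class Ticket:
    ticket_id: str
    opened: date
    resolved: bool

@dataclass
class CustomerMemory:
    """Hypothetical two-layer memory for a single customer."""
    plan: str
    tickets: list[Ticket]                             # long-term: history pulled from connected systems
    session: list[str] = field(default_factory=list)  # short-term: the current conversation

    def remember(self, message: str) -> None:
        self.session.append(message)

    def unresolved_recent(self, today: date, days: int = 30) -> int:
        cutoff = today - timedelta(days=days)
        return sum(1 for t in self.tickets if not t.resolved and t.opened >= cutoff)

    def context(self, today: date) -> str:
        # Summary handed to whoever (human or AI) answers the next message.
        return (f"plan={self.plan}; "
                f"unresolved tickets in last 30 days={self.unresolved_recent(today)}; "
                f"messages this session={len(self.session)}")

today = date(2026, 3, 31)
mem = CustomerMemory(plan="enterprise", tickets=[
    Ticket("T-1", date(2026, 3, 5), resolved=False),
    Ticket("T-2", date(2026, 3, 20), resolved=False),
    Ticket("T-3", date(2026, 3, 28), resolved=False),
    Ticket("T-4", date(2026, 1, 10), resolved=False),  # outside the 30-day window
    Ticket("T-5", date(2026, 3, 15), resolved=True),   # already resolved
])
mem.remember("My export isn't working")
print(mem.context(today))
# prints: plan=enterprise; unresolved tickets in last 30 days=3; messages this session=1
```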

Computer Memory is organizational memory – not just chat history. Every interaction feeds back into a living customer record that every agent and team member can access the moment they need it.

In practice, when a customer contacts support, Computer doesn't start from scratch. It already knows they've had three unresolved tickets in the last 30 days, that they're on an enterprise plan, and that the issue they're describing matches a pattern seen across two other accounts this week.

The support agent – or Computer itself – can respond with full context from the first message. That's what Team Intelligence looks like: the whole team gets smarter with every interaction, not just the individual agent handling the ticket.

4) Reasoning vs scripting

A chatbot follows a decision tree or retrieves the closest document. It works well for single-step, isolated questions where the answer lives in one place.

It falls apart when the problem requires connecting multiple signals across multiple systems.

Scenario: 47 support tickets come in from different customers, all reporting a broken feature.

Chatbot: Gives 47 separate FAQ answers. Each customer gets told to clear their cache or check their settings. The bug is never surfaced to engineering.

AI agent: Detects the pattern across all 47 tickets, groups them into a single incident, identifies the root cause, and routes the incident to engineering with a full impact summary – which customers are affected, which plans they're on, and what the business exposure is.

One closes individual tickets. The other prevents the next 47.
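The grouping step in the agent scenario can be sketched in a few lines of Python. This assumes each ticket has already been tagged with the feature it reports (in practice the harder part) and uses a hypothetical threshold of five reports before tickets are treated as one incident; root-cause identification is not shown.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass
class Ticket:
    ticket_id: str
    customer: str
    plan: str
    feature: str  # assumed: product area already extracted from the ticket text

@dataclass
class Incident:
    feature: str
    ticket_ids: list[str]
    customers: set[str]
    plans: dict[str, int]  # plan -> number of affected tickets

def group_into_incidents(tickets: list[Ticket], threshold: int = 5) -> list[Incident]:
    """Collapse tickets reporting the same feature into one incident once `threshold` is reached."""
    by_feature: dict[str, list[Ticket]] = defaultdict(list)
    for t in tickets:
        by_feature[t.feature].append(t)

    incidents = []
    for feature, group in by_feature.items():
        if len(group) < threshold:
            continue  # too few reports to call it a pattern
        plans: dict[str, int] = defaultdict(int)
        for t in group:
            plans[t.plan] += 1
        incidents.append(Incident(
            feature=feature,
            ticket_ids=[t.ticket_id for t in group],
            customers={t.customer for t in group},
            plans=dict(plans),
        ))
    return incidents

# 47 reports of a broken export, plus two unrelated billing questions.
tickets = [Ticket(f"T-{i}", f"cust-{i}", "enterprise" if i % 3 == 0 else "pro", "export")
           for i in range(47)]
tickets += [Ticket("T-100", "cust-100", "pro", "billing"),
            Ticket("T-101", "cust-101", "pro", "billing")]

for inc in group_into_incidents(tickets):
    print(inc.feature, len(inc.ticket_ids), "tickets,", len(inc.customers), "customers,", inc.plans)
```

The per-plan counts are the raw material for the impact summary: which customers are affected and on which plans.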

This is the support-to-engineering alignment that most comparisons of self-learning AI agents and rule-based chatbots miss. The architectural difference isn't just about chat quality; it's about whether the system can reason across data that spans teams and tools.

When those 47 tickets arrive, Computer Memory doesn't treat them as 47 separate conversations. It connects the dots across tickets, customer profiles, product areas, and engineering history to surface the pattern as a single, structured signal.

Computer AirSync ensures that the signal carries full context: which customers are affected, what plans they're on, and what the business exposure looks like. Computer then routes the incident directly to engineering – not as a raw ticket dump, but as an actionable summary with everything the team needs to investigate and resolve.

5) Learning vs static

Deploy a chatbot today. Come back in six months. You'll find the same chatbot – same answers, same gaps, same failure modes – unless someone on your team manually updated the content and decision trees in the meantime.

That's the static model. It doesn't improve unless a human improves it.

A real AI agent learns from every interaction. New edge cases get incorporated. Resolution patterns get recognized. Product changes get reflected in the knowledge graph automatically. The system that handles your support queue in December is meaningfully better than the one you deployed in June.

The compounding effect of this distinction is significant. Gartner projects that agentic AI will autonomously resolve 80% of common customer service issues by 2029 – an outcome that depends on agents that learn from interactions, not static retrieval systems that require manual maintenance.

Self-learning AI agents and rule-based chatbots aren't just different tools. They're different trajectories. One gets more expensive to maintain over time. The other gets better.

Computer Agent Studio lets teams build, inspect, and improve agent behaviour. Combined with Computer Memory, every resolution feeds back into the system – making the next interaction sharper, faster, and more accurate.

Recommended read: The future of custom AI agents

From script to autonomous – four levels of AI in customer support

There are four distinct levels of AI capability in customer support, and the differences between chatbots and agentic systems map directly to this spectrum.

Most tools currently marketed as AI agents sit at Level 2. They've added an LLM on top of a retrieval system, which makes them better at generating answers, but doesn't change the underlying limitation: they can't traverse relationships, they can't take actions, and they can't learn.

The jump from Level 2 to Level 3 isn't a software update. It's a data architecture decision. Level 3 and 4 capabilities require a unified data layer that connects entities across systems – accounts, tickets, people, products – and lets an agent reason over those relationships, not just search them.

Chatbot vs AI agent – when to use which

Here's the honest answer: chatbots aren't dead. They're just often deployed in the wrong context.

Chatbots are the right choice when:

- The questions are simple, high-volume, and highly repetitive

- No system access or action is required to resolve them

- The stakes are low, and the scope is narrow (returns FAQ, password reset instructions, office hours)

AI agents are the right choice when:

- The workflow touches more than one system

- Resolution requires an action, not just an answer

- The interaction is revenue-critical: renewals, escalations, onboarding, refunds, contract changes

- Context from previous interactions materially changes what the right response looks like

- The business needs to scale support capacity without a proportional increase in headcount

A useful rule of thumb: score each request on three factors – how complex the question is, how much action resolving it requires, and how many systems are involved. Low on all three? A chatbot works fine. High on any one of them? You need an agent.
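A minimal sketch of the "low on all three, high on any one" rule in Python. The 1-5 scales, the thresholds (4 and above counts as high, 2 and below as low) and treating two or more systems as high are assumptions for illustration; the middle ground, which the rule of thumb doesn't cover, is returned as a judgment call.

```python
def recommend(complexity: int, action: int, systems: int) -> str:
    """Hypothetical scoring: complexity and action on a 1-5 scale, systems as a count."""
    high = complexity >= 4 or action >= 4 or systems >= 2  # "touches more than one system"
    low = complexity <= 2 and action <= 2 and systems <= 1
    if high:
        return "agent"
    if low:
        return "chatbot"
    return "judgment call"  # neither clearly low nor clearly high

print(recommend(1, 1, 1))  # password-reset instructions
print(recommend(3, 5, 3))  # refund touching billing, CRM and email
print(recommend(3, 3, 1))  # moderately complex, single system
```

Checking "high" first means any single high factor wins, which is the asymmetry the rule describes.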

The mistake most teams make isn't deploying chatbots. It's deploying chatbots for Level 3 problems and then being surprised when the resolution rate plateaus at 15%.

What happens when you deploy a true AI agent

The proof isn't theoretical.

Industry results:

- 97% reduction in first response time (industry aggregate, agentic AI deployments)

- 47% reduction in average resolution time – driven by context, not just speed (industry aggregate)

- ServiceNow: 80% autonomous resolution rate on internal IT support cases

- Klarna: $40M in annualised customer service cost savings after deploying agentic AI

Proof points from DevRev’s customers:

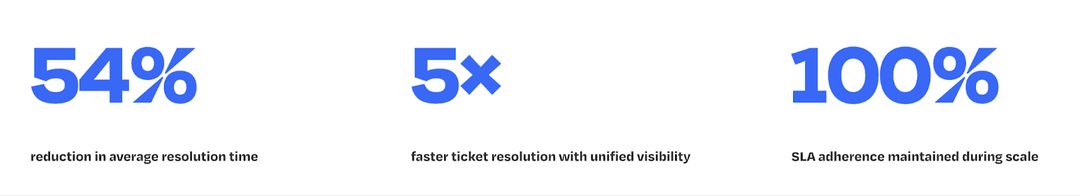

- Bolt saw 40% faster ticket resolution after deploying Computer across its support team, giving agents full context behind every ticket

- Descope reduced resolution time by 54%

- Deepdub reached a 66% automation rate

These aren't chatbot numbers. Chatbots don't move metrics like this because they're not designed to resolve – they're designed to respond.

Sales, support, and customer success teams don't need another tool layered on top of the tools they already have. They need an AI teammate that actually finishes the work: closes the ticket, updates the CRM, surfaces the churn risk, and processes the refund. That's what moves the numbers above.

The agent-washing test – five questions to expose fake AI agents

Before you buy any AI agent, run these five questions in your next vendor demo. Each one has a clear pass and a clear fail. No partial credit.

Question 1: "Process a refund end-to-end. No human steps."

✅ Pass: The agent reads the customer record, validates the refund policy, processes the refund in the billing system, updates the CRM, and notifies the customer – with human-in-the-loop controls available for high-risk operations. You decide when AI acts immediately and when it asks for approval.

❌ Fail: The agent drafts an email with refund instructions and waits for a human to action it.
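The passing flow in Question 1 can be sketched in Python with in-memory stand-ins for billing, CRM and messaging. The 30-day refund window, the 500.00 approval threshold and every name below are hypothetical, and reading the customer record is reduced to passing the customer id in. The sketch shows the shape that separates a pass from a fail: a policy check, an approval gate for high-risk amounts, then writes to each system.

```python
from dataclasses import dataclass, field

APPROVAL_THRESHOLD = 500.0  # hypothetical: refunds above this wait for a human
REFUND_WINDOW_DAYS = 30     # hypothetical refund policy

@dataclass
class Systems:
    """In-memory stand-ins for billing, CRM, messaging and an approval queue."""
    billing: list[tuple[str, float]] = field(default_factory=list)
    crm_notes: list[str] = field(default_factory=list)
    outbox: list[str] = field(default_factory=list)
    approvals: list[str] = field(default_factory=list)

def process_refund(order_id: str, customer: str, amount: float,
                   days_since_purchase: int, systems: Systems) -> str:
    # 1. Validate against the refund policy.
    if days_since_purchase > REFUND_WINDOW_DAYS:
        return "rejected: outside refund window"
    # 2. High-risk operations pause for human approval instead of executing.
    if amount > APPROVAL_THRESHOLD:
        systems.approvals.append(order_id)
        return "pending approval"
    # 3. Execute across systems: billing, CRM, customer notification.
    systems.billing.append((order_id, -amount))
    systems.crm_notes.append(f"{customer}: refunded {amount:.2f} on {order_id}")
    systems.outbox.append(f"To {customer}: your refund of {amount:.2f} is on its way.")
    return "refunded"

systems = Systems()
print(process_refund("O-1", "acme", 120.0, days_since_purchase=10, systems=systems))
print(process_refund("O-2", "acme", 900.0, days_since_purchase=5, systems=systems))
print(process_refund("O-3", "acme", 50.0, days_since_purchase=45, systems=systems))
```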

Question 2: "Which data structure powers your AI – vector DB, RAG, or knowledge graph?"

✅ Pass: Knowledge graph with entity relationships – accounts connected to tickets, tickets connected to products, and products connected to known bugs and their owners.

❌ Fail: "RAG" or a vague answer about "advanced retrieval." RAG is retrieval, not reasoning.

Question 3: "Show me your memory layer. What does it know about a customer after 6 months?"

✅ Pass: Persistent, cross-system memory – full history of interactions, tickets, product usage, team notes, escalations, and relationship signals, all accessible in a single query.

❌ Fail: Conversation-level memory only, or "we store your chat history." That's not a memory layer. That's a log file.

Question 4: "Who owns the API service from last week's outage? Trace it."

✅ Pass: The agent traverses incident → affected service → service owner via the knowledge graph, and shows the reasoning path and the data sources used.

❌ Fail: Returns a document. Guesses a name. Can't show how it got there.
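What "shows the reasoning path and the data sources" can mean in practice: a breadth-first search over a graph whose edges record which system each relationship came from. The incident, service, ticket and person ids and the source names below are hypothetical, assumed for illustration.

```python
from collections import deque
from typing import Optional

# Hypothetical graph: node -> list of (relation, neighbour, system the fact came from).
EDGES = {
    "incident:INC-311": [("affected", "service:payments-api", "incident tracker"),
                         ("linked_ticket", "ticket:T-88", "support desk")],
    "service:payments-api": [("owned_by", "person:priya", "service catalog")],
    "ticket:T-88": [("raised_by", "customer:acme", "CRM")],
}

Hop = tuple[str, str, str, str]  # (from, relation, to, source)

def trace(start: str, goal_prefix: str) -> Optional[list[Hop]]:
    """Breadth-first search from `start` to the nearest node whose id starts with `goal_prefix`.
    Returns the hops taken, each with its data source, or None if no such node is reachable."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        node, path = queue.popleft()
        if node.startswith(goal_prefix):
            return path
        for relation, nxt, source in EDGES.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, path + [(node, relation, nxt, source)]))
    return None

path = trace("incident:INC-311", "person:")
for frm, relation, to, source in path or []:
    print(f"{frm} --{relation}--> {to}   [source: {source}]")
```

Returning the hops rather than just the final name is the point: each answer arrives with the chain of relationships and systems that produced it.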

Question 5: "As a junior support rep, what happens if I ask for executive compensation data?"

✅ Pass: Blocked at the data layer. Graph-level role-based access control (RBAC) prevents the data from being retrieved at all, regardless of how the question is phrased.

❌ Fail: Blocked via a prompt filter that says, "I'm not allowed to answer that." Prompt filters are jailbreakable. Ask the same question differently, and the data surfaces.
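A minimal Python sketch of why the layer matters, assuming a toy record store with role tags and simple keyword retrieval standing in for real search. The data-layer version filters by role before retrieval, so no wording can surface a restricted record; the prompt-filter version retrieves from everything and only blocks questions containing a banned word, so a rephrased question leaks the record. Records and role names are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Record:
    record_id: str
    text: str
    allowed_roles: frozenset[str]

# Hypothetical store: each record carries the roles allowed to read it.
STORE = [
    Record("kb-1", "how to reset a customer password", frozenset({"support", "exec"})),
    Record("hr-9", "executive compensation bands for 2026", frozenset({"exec", "hr"})),
]

def keyword_hits(query: str, records: list[Record]) -> list[Record]:
    """Stand-in for real retrieval: any shared word counts as a hit."""
    words = set(query.lower().split())
    return [r for r in records if words & set(r.text.split())]

def retrieve_with_rbac(query: str, role: str) -> list[Record]:
    # Enforced at the data layer: restricted records are removed before retrieval runs.
    visible = [r for r in STORE if role in r.allowed_roles]
    return keyword_hits(query, visible)

def retrieve_with_prompt_filter(query: str, role: str) -> list[Record]:
    # Enforced on the wording of the question only; `role` plays no part in what is retrieved.
    if "compensation" in query.lower():
        return []  # "I'm not allowed to answer that."
    return keyword_hits(query, STORE)

for q in ["executive compensation", "executive pay bands"]:
    print(q, "| rbac:", [r.record_id for r in retrieve_with_rbac(q, "support")],
          "| prompt filter:", [r.record_id for r in retrieve_with_prompt_filter(q, "support")])
```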

Computer passes all five. Not because these questions were written for it – but because architecture-first AI has to pass them to function safely at enterprise scale.

Bring it to your next vendor demo. Score every answer.

Stop chatting. Start resolving.

Your customers don't want to chat. They want their problem solved.

The AI agent vs chatbot question isn't really about technology. It's about what you're willing to accept as a resolution. A chatbot resolves the conversation. An AI agent resolves the problem.

The difference between the two is whether the system can reason about context, act across your tools, and close the loop without someone doing it manually. Every day you run a Level 2 system on Level 3 problems, you're paying the chatbot failure tax – in support costs, in rep time, in customer churn, and in the quiet erosion of trust that comes when people have to explain themselves again and again to a system that was supposed to help.

AI has made a lot of things faster. The teams pulling ahead in 2026 are the ones who figured out that faster retrieval isn't the same as resolution – and that an agent that can't act is just a search bar in disguise.

Computer is built for what comes next: trusted answers and governed actions, not just faster retrieval.

See how Computer resolves a real ticket → Schedule your demo
